Why a Blog? AI is Changing Analytics

Why a blog?

I am not a blogger or influencer, and my social media follower count hasn’t changed in years. I wanted to start recording my thoughts because we are in the middle of a monumental shift in technology. I’m a Product Manager at Nirvana Insurance and an MBA student at Indiana University’s Kelley School of Business, and I’m watching AI change the day-to-day reality of insurance analytics in real time.

I’ve been in data analytics for over a decade at Progressive, Capital One and now Nirvana, and my work has changed more in the last six months than the ten years before it. Software design and data science have been areas I’ve relied on heavily technical teams to execute in my workflows, with built-in delays from resourcing, prioritization, and time zones. I’m good enough with Python and scikit-learn to whip up an average model, but as a PM, that usually isn’t my day job.

But things are different now.

AI agent workflows let me build an endless “team” that already has these capabilities. The limits of software and model design have shifted, they’re now more about ideas than developer bandwidth. Cursor, Claude Code, and Antigravity have written far more lines of code for me this year than I wrote in the decade before. Analysis has become a totally different process, I use Cursor to build software to accomplish what I would have once done manually with piles of SQL functions, Excel formulas, and pivot calculations.

Quick examples

Just in the last few months, I’ve built things that would have been unrealistic for me to do solo before, or would have taken ages of Googling StackOverflow and Reddit, plus hours of meetings with engineers or data scientists.

One example is using Cursor to quickly develop API-connected software that stitches together Google Sheets, Snowflake data, and local Python models. This used to be the kind of work where I could outline the requirements, then wait on bandwidth, handoffs, and time zones. Now I can prototype the system end to end, iterate on it fast, and then bring engineering in for hardening instead of starting from scratch.

Another is building more advanced modeling than I typically touch in my day job. I’ve been able to develop XGBoost models, run proper hyperparameter tuning, and set up repeatable evaluation so I’m not just “trying settings” and hoping it works. Even when I’m not the one pushing the final model to production, I can finally explore the solution space myself and show up with something concrete.

And a big one has been document work. I’ve used AI to parse large-scale documents and turn them into usable, structured data. Previously, that would have been painfully manual or would have required building a one-off pipeline that I’d never have time to maintain. Now it’s a workflow I can stand up quickly, test, and refine.

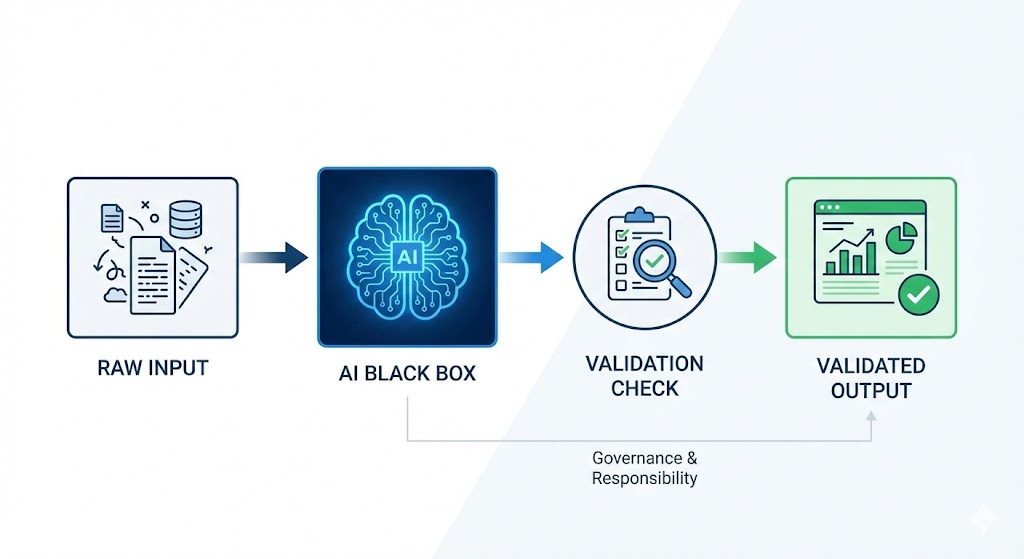

None of these are magical. They still require careful scoping, quality control, and a lot of judgment. But the pace is different. The bottleneck has shifted from “can we build it” to “how do we validate it and scale it responsibly.”

What I mean by “agents”

When I say “AI agents,” I’m using the term in two ways.

The more precise definition is code that uses large language models (LLMs) inside a workflow, where the model is one component in a structured system. The looser definition, and the one I use day to day, is AI-powered automation through tools like Cursor, where I’m delegating chunks of work to an assistant that can draft, refactor, test, and iterate quickly. It’s not a perfect definition, but it’s close enough to describe how it changes my workflow.

There are new struggles in analytics, though. This newfound speed, plus being one step removed from the ETL process, means I’m sometimes less familiar with the underlying data. That makes quality control even more important than it used to be. How we use engineers and data scientists is changing rapidly too. Instead of spending a big chunk of collaboration time on building software or structuring model-ready data, there’s more need for things like advanced unit testing, evaluation harnesses, monitoring, and hyperparameter tuning.

My rules so far

Data analytics still needs to be spot-checked. Speed is amazing, but speed also lets you get to the wrong answer faster.

My baseline rule is to always have a “before and after” for the LLM. That means being explicit about what the input will look like, what the output should look like, and what sanity checks must pass. Magnitude checks, trend checks, and “does this align with what we already know” checks matter more than ever. Sometimes the best value the model provides is not the output itself, but the clarity it forces around definitions, assumptions, and edge cases.

And these rules are constantly changing. As different models improve, the failure modes change too. The playbook is not static, it has to evolve with the tools.

What happens next

The next set of constraints is less about what we can build, and more about how we build responsibly and sustainably.

Governance and evaluation become huge. If workflows can be created and iterated rapidly, we need equally strong ways to validate them, monitor them, and understand when they drift. And then there’s change management. New processes can be developed faster than manual teams may want to, or be able to, adjust. The technology curve is steep, but organizational adoption rarely matches it.

When ChatGPT made waves in November 2022, there was a prominent fear that “AI was coming for our jobs.” In my world of product management, I think it will be quite a while until that’s realistic. The work is too distinct, the local knowledge is too diverse, and execution has too many moving pieces. One day it might be possible, but for now I believe AI will do something more practical, it will let us focus on the harder problems, not eliminate the role.

This blog will focus on my journey with these tools, learning in real time and figuring out how they can 10x my capabilities. I’ll write about what’s working and what’s failing as I use tools like Cursor and Claude Code to rebuild parts of my analytics workflow, with real examples from insurance product management and data analytics.