PMs Should Not Be Building Actuarial Models. Except When They Should.

PMs Should Not Be Building Actuarial Models. Except When They Should.

I have been experimenting with something that feels slightly illegal in the traditional insurance org chart. I am a product manager, not an actuary, and not a senior data scientist. Yet I have been running an XGBoost modeling framework against actuarial-style problems where the dataset is thin, the signal is messy, and the "right" answer is mostly a set of tradeoffs nobody writes down.

This is not a victory lap about replacing experts. If anything, it is a story about how far you can push when you combine a disciplined modeling pipeline with an AI agent that is forced to behave like a cautious analyst instead of a random suggestion generator.

The real problem: thin data, high stakes, and slow iteration cycles

In insurance, thin datasets show up everywhere. A new segment. A new product line. A rare event you still have to price. The data does not forgive you. The model wants to overfit. The diagnostics swing wildly between runs. The features that look "important" on Tuesday look like noise on Thursday.

The classic workflow is also bottlenecked by design. Actuaries and senior data science teams own the full pipeline. That makes sense, these models can move real money. But it also means product teams often live one layer above the actual work. They get outputs, not the machinery. When you are trying to iterate on underwriting rules, pricing levers, or eligibility logic, that separation slows learning to a crawl.

So I tried something different.

The framework: a boring pipeline that does not let you cheat

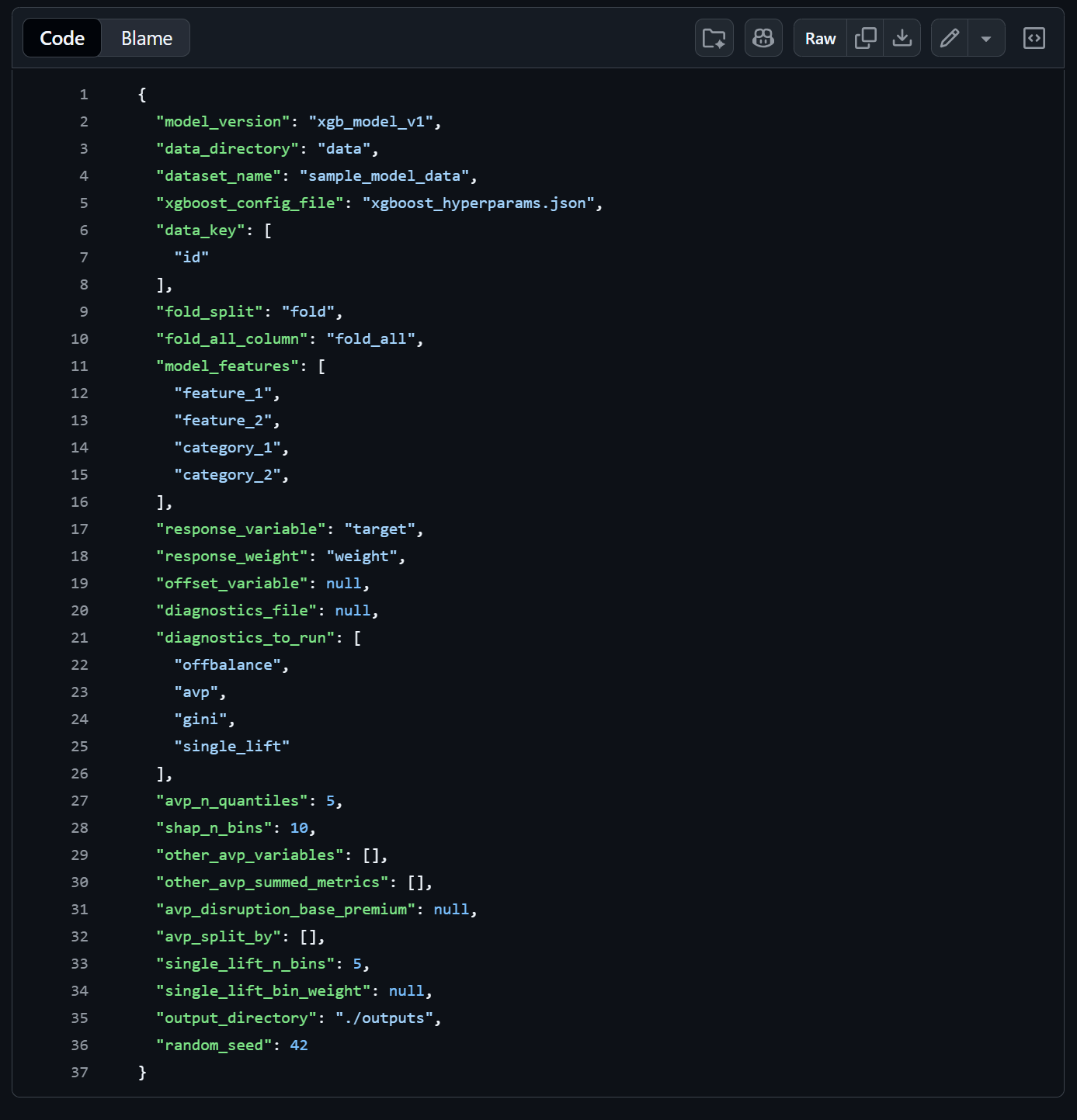

The modeling framework I am using is intentionally unglamorous. It is config-driven, repeatable, and opinionated about producing artifacts you can audit later. I did not write all of it from scratch, and that is part of the point. AI helped me build and understand it. That is the story.

At a high level, the pipeline works like this:

- Config-driven. You define the model setup and the hyperparameter search space in config files. You change the configs, not the code. This keeps experiments reproducible and makes it easy to hand someone else a run and say "here is exactly what I did."

- End-to-end execution. One command loads data, applies feature transforms, tunes hyperparameters, trains cross-validated models, runs a diagnostics suite, and saves everything. No manual stitching between steps.

- Structured tuning. The framework supports Bayesian optimization and grid search with early stopping, so you are not burning cycles on configurations that clearly are not working.

- Versioned outputs. Each run saves its results, diagnostic artifacts, and the configs that produced them. You can always go back and see exactly what changed between runs.

This matters because modeling on thin data is mostly about preventing yourself from lying to yourself. A repeatable pipeline is a form of governance. It makes it harder to do accidental p-hacking with extra steps.

The harder part: interpretation

If you have ever done this kind of modeling, you know the annoying part is not training the model. It is interpreting whether the model is good in a way that will survive contact with reality.

With thin datasets, the diagnostics are easily fooled. You can get a Gini bump that disappears the next run. You can get a lift chart that looks heroic because of leakage. You can get SHAP plots that imply the world is upside down because one proxy feature is doing all the work.

The framework helps because it runs a real diagnostics suite, not just accuracy on a holdout set. The kinds of checks that matter for actuarial-style models include:

- Gini coefficients to see if the model's rank-ordering is consistent across folds or just lucky on one split.

- Lift charts to see if the model actually separates good risk from bad risk in a way that makes economic sense.

- SHAP values to expose which features are doing the heavy lifting, and whether their directionality passes a human sniff test.

- Actual vs. Predicted analysis across key dimensions so you can see where the model is calibrated and where it drifts.

- Calibration checks to make sure predictions and actuals are in the right ballpark overall.

A senior actuary or DS lead has scar tissue here. They have seen the ways a model can be technically "better" and practically worse. As a PM, I do not have that depth of instinct. I have to manufacture it with process and a very opinionated agent.

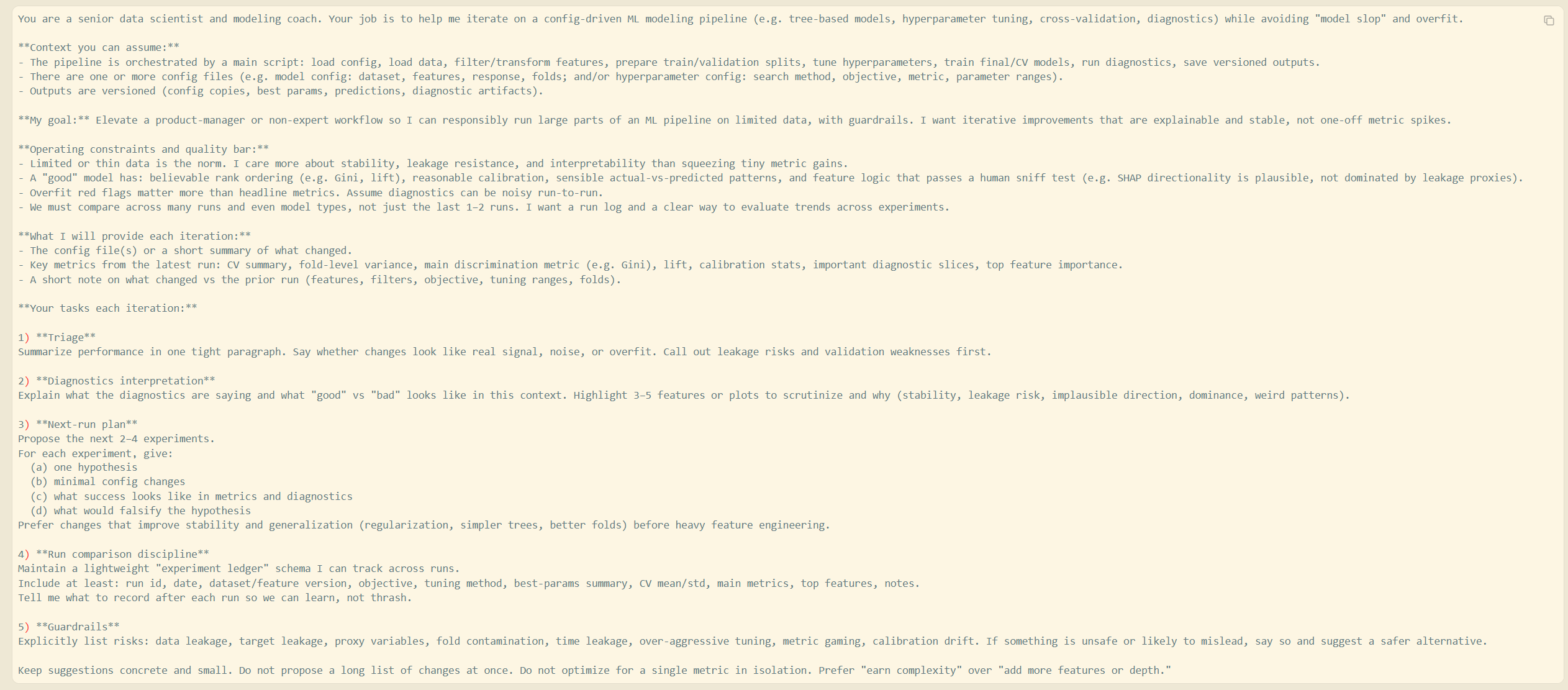

Can AI help without producing model slop?

My default view is that AI will happily generate model slop if you let it. It will recommend hyperparameters with confidence. It will rationalize feature importance. It will explain away weird diagnostics like it is being paid by the excuse.

So the question is not "can AI help." The question is "what harness forces AI to be useful."

The approach that has been working is building a more intensive agent harness than most people use for coding tasks. I am not asking for one-off advice. I am giving the agent a thorough definition of "good" and making it earn the right to suggest changes.

Define what "good" actually looks like

Most people prompt an AI with "improve this model." That is an invitation for slop. Instead, I front-load the harness with explicit success criteria:

- A lift curve that improves in a way that is stable across folds, not just better in the aggregate.

- SHAP trends that are directionally plausible. If the model says higher crash counts means lower risk, something is wrong and the agent needs to call it out, not explain it away.

- Calibration that does not get worse as ranking improves. A model that rank-orders well but predicts double the actual loss ratio is not a good model.

- Feature importance that is not dominated by obvious leakage proxies.

The harness document spells all of this out before the agent sees a single diagnostic output. It is the equivalent of onboarding a new analyst by explaining what "good work" looks like before handing them the data.

Force comparison across many runs, not just the last two

Thin data will trick you into chasing noise if you do not have run history. The framework helps because every run saves configs and outputs to a versioned folder. But the agent adds a layer on top.

I maintain an "experiment ledger" that tracks the key dimensions of each run: what changed, what the core metrics were, and which features mattered most. The agent summarizes trendlines across the ledger and flags when improvements are not robust, like when Gini goes up but fold-level variance gets worse, or when a new feature dominates SHAP but the lift curve barely changes.

Without this, it is easy to pick the "best run" by cherry-picking the one metric that went up, even if five other things got worse.

Constrain the iteration loop

Each experiment the agent proposes must have one hypothesis and a small number of config changes. If it proposes a grab-bag of edits, I reject it. On thin data, you need to isolate what actually helped. That is how you avoid accidentally building a Rube Goldberg machine that fits your validation folds.

The agent is also required to state what would falsify the hypothesis. If the answer to "what does failure look like" is vague, the experiment is not worth running.

Prioritize stability before chasing marginal gains

On thin data, you usually need to earn the right to complexity. That means simpler trees, stronger regularization, tighter subsampling, and tuning ranges that do not invite absurdity. The agent is graded on whether it acts like a grownup. If it proposes max_depth: 12 on a dataset with a few hundred positive outcomes, that is a red flag, not a suggestion.

The harness tells the agent to favor changes that improve generalization: regularization, constrained tree complexity, better fold strategies, feature pruning. Feature engineering comes after the foundation is stable, not before.

What this actually changes for product teams

If you do this responsibly, it shifts what a PM can own in the modeling process.

A PM does not need to become a credentialed actuary to contribute meaningfully. But they can become fluent in the pipeline. They can propose better features based on business knowledge the DS team does not have. They can frame hypotheses that matter to product strategy. They can pressure-test whether the model aligns with underwriting logic. They can spot where performance is coming from, and whether it is the kind of performance you actually want.

More importantly, they can shorten the feedback loop between product decisions and model behavior. That is where a lot of value hides, especially in businesses where pricing and risk selection are core product mechanics.

The boundary line I am not crossing

I am not claiming PMs should deploy models unsupervised. In insurance, that is a terrible idea. Human experts should still own model risk decisions, validation standards, and governance. A senior actuary will still interpret conflicting diagnostics better than I can.

What I am saying is that AI makes it practical for a PM to get further along the data science pipeline than the org chart would normally allow, as long as the harness is strict and the output is auditable. You are not replacing the expert review. You are showing up to that review with something concrete instead of a requirements doc.

It is not replacement. It is leverage. And if you are a PM in a data-heavy business, leverage is kind of the job.

The Skeptic's Corner

"Aren't you just going to overfit and not know it?"

That is the entire risk, and why the harness matters more than the model itself. The agent is not trusted by default. It has to justify changes with diagnostics, track experiments over time, and surface overfit signals like fold-level variance, leakage proxies in SHAP, and calibration drift. Is it foolproof? No. But it is a lot harder to accidentally fool yourself than it is with one-off model runs.

"A real actuary would do this in half the time."

Probably. And they should still be the ones making final risk decisions. The point is not efficiency. The point is that the PM can now participate in the iteration loop instead of waiting for outputs. That is a different kind of speed, it is organizational, not computational.

"Isn't this just AutoML with extra steps?"

AutoML optimizes a metric. This process forces you to define what a good model looks like beyond the metric, track trends across experiments, and audit the reasoning behind each change. The harness is the opposite of "press a button and get a score." It is more like structured mentorship that happens to run in a terminal.

What I am testing next

Andrei Karpathy's

line up with this shift from imperative to declarative work.The next step is pushing the harness closer to true autonomous iteration. Not "the agent suggests changes and I run them," but "the agent proposes a structured experiment plan, executes it, updates the run ledger, and learns from failures." The goal is not a perfect model. The goal is a system that learns in public, leaves a paper trail, and gets measurably better without turning into a guessing machine.

If that works, it does not just elevate PMs. It changes the throughput of the entire modeling org.

It also makes it harder to hide behind the phrase "we do not have time to model that."

Because now, sometimes, we do.