How I Stopped Drowning in Data Governance and Started Actually Understanding Databases

How I Stopped Drowning in Data Governance and Started Actually Understanding Databases

From weeks of manual exploration to minutes of AI-assisted comprehension

Update February 14, 2026: I added a clearer breakdown of Rules vs MCP vs Skills and where database-scanner fits.

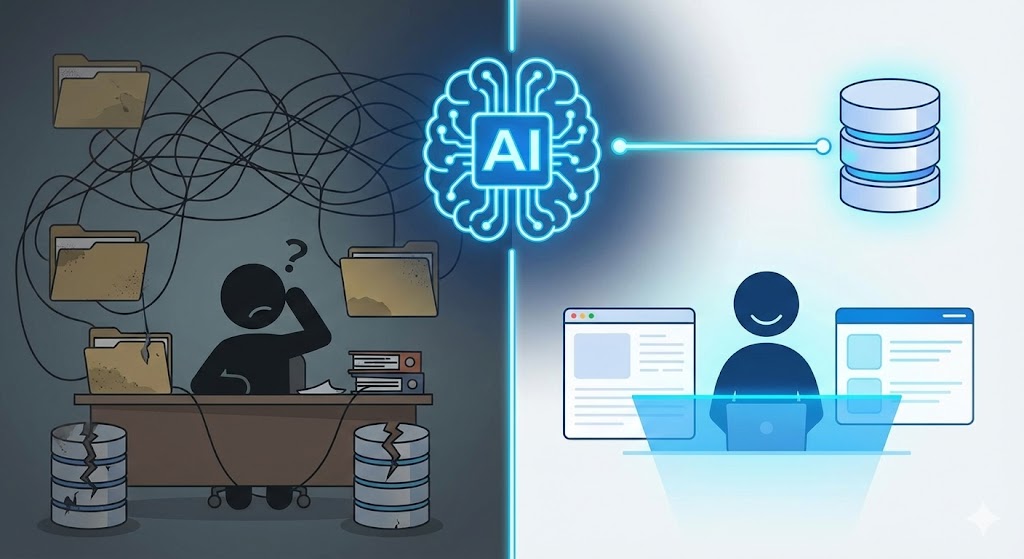

The old way was brutal

Every data professional knows the pain. You join a new team, inherit a legacy system, or get access to a database that has been evolving for years without you. Then you start the slog.

You pull up the schema. You see 47 tables. You open the data governance documentation, if it exists. It is usually out of date. You start running:

SELECT * FROM table_name LIMIT 10;

Over and over.

You squint at column names like INTERNAL_ID, ROOT_APPLICATION_ID, and CREATED_AT. Then you guess what joins to what. You build a mental model through trial and error.

Hours turn into days. Days turn into weeks. Eventually, you figure out how the data flows. You learn which tables are the golden sources. You learn which columns are still used versus legacy cruft.

Then someone asks you for a quick analysis in a completely different schema. Back to square one.

I lived this cycle for years. Manual exploration. Stale docs. Tribal knowledge. It worked, but it was slow, painful, and it did not scale.

The new way is not "a scanner." It is a system.

My first pass at fixing this was what I called a database scanner. It helped, but it was not the real breakthrough.

The real breakthrough was designing a simple operating system with three parts:

Rules

Rules are short, always-on guardrails. They define how the agent should behave and how we prevent dumb mistakes.

Rules answer questions like:

- What counts as a "trusted" metric definition here?

- What tables are canonical versus derived?

- What filters are mandatory?

- When should the agent stop and ask instead of guessing?

Rules are not a data dump. They are a decision layer.

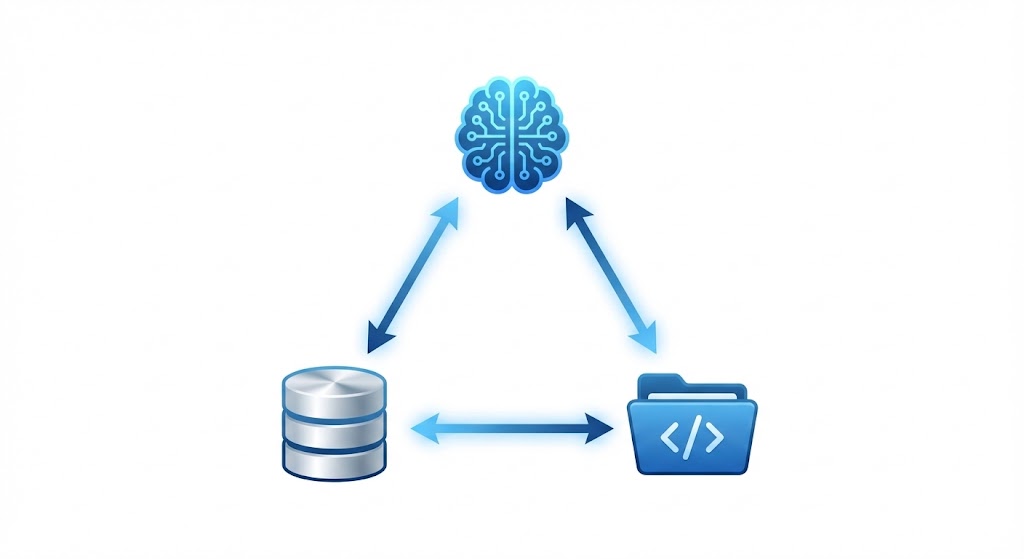

MCP (Model Context Protocol)

MCP is the pipe. It lets the agent pull facts from systems on demand.

In my setup, that is:

- Snowflake MCP for schema, sample rows, counts, and joins.

- Metabase MCP for definitions, saved questions, dashboards, and the semantic logic people actually use.

MCP is how you stop pasting screenshots and schemas into prompts. It is also how you keep the model grounded in reality.

Skills

Skills are directed workflows. They are what you run when you need multi-step synthesis, not a single answer.

Database-scanner is a skill. It is a repeatable routine that explores a domain, tests join paths, surfaces sharp edges, and produces a usable map of a schema.

That distinction matters because MCP and skills solve different problems.

ELI5: how the system works

Think of it like onboarding a new analyst.

- Rules are your team's onboarding playbook.

- MCP is letting the analyst open Snowflake and Metabase directly.

- Skills are the analyst's proven checklist for ramping quickly.

When those three work together, "understanding the database" becomes a fast loop instead of a month-long project.

Why MCP changes the game

Most AI workflows fail for one reason. People try to solve retrieval with context.

They paste a schema. They paste example SQL. They paste dashboards. The prompt becomes a landfill. Token usage spikes, and quality drops. The model starts making confident guesses because it cannot keep the ground truth in view.

MCP flips the workflow.

Instead of preloading everything, the agent retrieves exactly what it needs:

- It asks Snowflake what exists.

- It asks Metabase what the business means.

- It uses rules to avoid bad assumptions.

- It uses a skill only when it needs synthesis.

This is why MCP is also a token optimization tool. You move from "stuff context into every prompt" to "retrieve narrowly, reason clearly."

Snowflake MCP is the facts layer

Snowflake MCP is how I stop guessing.

When I ask, "what tables matter here?" the agent can:

- Pull table and column metadata.

- Sample rows to understand real values.

- Compute quick distributions.

- Test joins and uniqueness.

This eliminates the classic hallucination risk. The model is not inventing relationships from vibes. It is interrogating the actual database.

Metabase MCP is the meaning layer

Snowflake can tell you what a column is called. It cannot tell you what your company means by "active" or "retained" unless that definition exists somewhere.

In many teams, Metabase is where meaning lives:

- Metric definitions.

- Saved questions people trust.

- The filter logic that has been argued over.

- Dashboard conventions that quietly become policy.

Metabase MCP lets the agent pull that meaning on demand. It reduces the risk of writing technically correct SQL that answers the wrong business question.

This is also why "just scan the schema" is not enough. You need the facts and the meaning.

So where does database-scanner fit?

Database-scanner is a skill I use when the domain is messy and MCP alone is not enough.

It is useful when:

- A schema has multiple "almost the same" tables.

- Join keys exist but are not clean.

- There are snapshots, soft deletes, and duplicates.

- The same entity is modeled three different ways across teams.

- You need a reusable map, not a one-off query.

Database-scanner is not the default path. It is the escalation path.

The decision rule I actually use

This is the part most people skip, and it is the reason their AI setup feels unreliable.

-

Use MCP first. Ask Snowflake and Metabase for what you need. Keep it grounded. Keep it small.

-

Use rules to constrain behavior. If the model cannot verify something, it should say so. If a metric requires a filter, apply it every time.

-

Run database-scanner only when you need synthesis. If you have done a few MCP calls and still do not trust your mental model, you do not need more context. You need a directed workflow that builds a map.

That is the entire system. Retrieval, guardrails, and then synthesis.

The real power: from understanding to writing SQL

Once the agent is grounded, natural language SQL gets genuinely useful.

Instead of:

- Figuring out which tables to query.

- Guessing at join conditions.

- Testing random approaches.

- Debugging errors in a loop.

You describe what you want:

"I need the policy numbers and coverage details for all active bundles, including VIN information from nested JSON in program_data."

The agent can generate SQL with:

- The right joins based on verified relationships.

- The JSON parsing functions that match your warehouse.

- Filters that match your team's conventions.

Does it work perfectly on the first try every time? No. But the feedback loop is dramatically faster, and it is safer. The agent can see errors, correct them, and keep checking the database through MCP.

What this changes practically

For new team members

Instead of weeks of ramp-up, someone can get oriented in a single afternoon. They can retrieve definitions from Metabase, verify tables in Snowflake, and use a scanner skill only when the domain is truly confusing.

For cross-functional work

If marketing needs a dataset in a schema you have never touched, you are not blocked. You can get productive quickly, and validate as you go.

For documentation

The outputs become living docs. The difference is that they are grounded. They are built from verified tool calls, not someone's memory.

For cognitive load

This is the big one. I do not carry mental maps of 15 schemas anymore. I re-orient fast when I need to. My brain goes back to analysis, not archaeology.

Getting started

If you want to replicate this approach, think in layers.

-

Set up MCP connections. At minimum, connect Snowflake. If your org uses Metabase as the shared truth layer, connect that too.

-

Write a small rules file. Keep it short. Include definitions that must not drift, required filters, and safety constraints like read-only access.

-

Implement the database-scanner skill. Treat it like a playbook. It should output a clear schema map, likely join paths, and the sharp edges to avoid.

-

Use an agentic environment that can execute. Cursor is my default because it can write and run code in context. Any environment that supports tool calls and iteration can work.

The setup takes a few hours. After that, every new database becomes accessible in minutes instead of weeks.

The skeptic's corner

"But AI hallucinates. Won't it make up relationships that don't exist?"

It can, which is why the system matters. MCP keeps the agent grounded in Snowflake and Metabase. Rules define what it is allowed to claim. Skills provide a structured workflow instead of free-form guessing.

"What about data security?"

Valid concern. Use read-only roles. Keep API keys secure. If sensitive PII is a concern, use a sanitized replica. Apply the same security standards you would use for any programmatic database access.

"Isn't this just a fancy schema browser?"

A schema browser shows tables and columns. This system explains meaning and usage. It connects facts to definitions, then produces a map you can act on. That gap is the difference between data and understanding.

The takeaway

Data governance and manual database exploration are not going away. The time spent on them can shrink dramatically.

The workflow that actually scales is not "a scanner." It is rules, MCP, and skills working together.

Facts come from Snowflake. Meaning comes from Metabase. Synthesis comes from skills like database-scanner.

I spent years learning databases the hard way. Now I learn them the smart way. You should too.